Videos

Shotmaker Experiments - The Daydreamer Evolution

A sequence of experiments exploring how structured 3D scenes, AI feedback, and music-driven parameter changes interact to produce evolving visual behavior.

These videos are grouped by capability — from basic structure preservation to physics interaction and beat-synchronized hallucination.

The original goal of Shotmaker was to build a repeatable AI filmmaking process using structured 3D scene data from game engines.

But generative AI does something unusual. It hallucinates new details — shapes, textures, and motion that were never explicitly programmed. Normally those hallucinations flicker and disappear from frame to frame.

In this system — now called Daydreamer — a feedback mechanism allows the model to remember what it invented and carry those ideas forward. You can watch it explore and play with its own creations over time.

The visual shifts are not random. They are driven by the music.

On each beat, rendering parameters such as denoising strength, CFG (classifier-free guidance scale), and other weights are dynamically adjusted. It is similar to shaking an Etch-A-Sketch on the downbeat: the system clears space and invents something new, then builds on it in the moments that follow.

These experiments are both artistic exploration and technical research into how controllable AI video systems might evolve.

1. Structure — The System Learns the Scene

These experiments demonstrate how the renderer begins with a stable 3D foundation from Unity and gradually introduces hallucinated detail as parameters increase.

The key question explored here:

How much creative variation can be introduced while preserving spatial structure?

2. Style — Portrait Mode and Composition

These experiments explore vertical composition designed for mobile viewing and social media formats.

The renderer focuses on character presence while maintaining environmental context.

3. Energy — Music Makes It Move

These experiments synchronize rendering behavior to musical structure and sound energy.

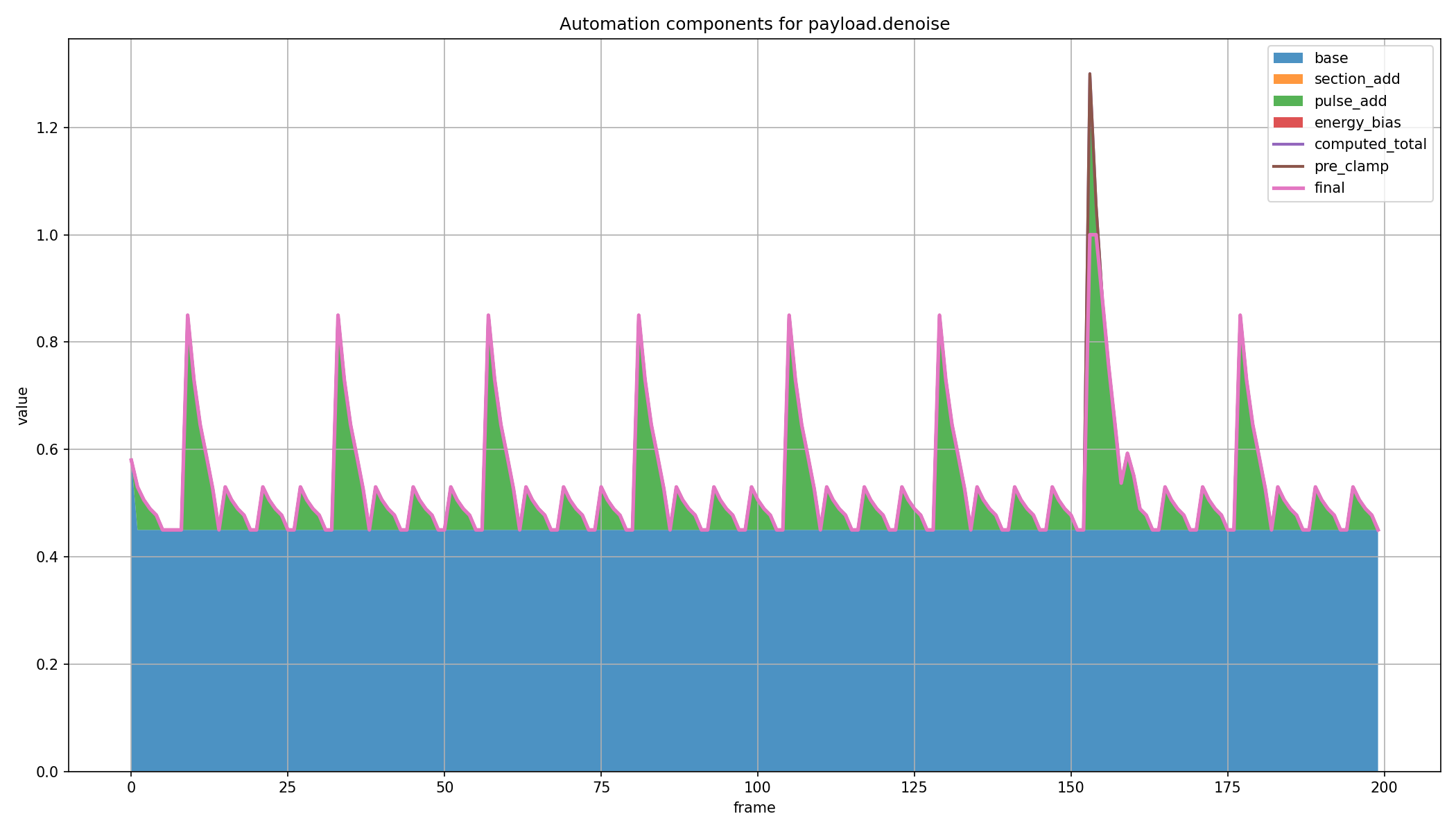

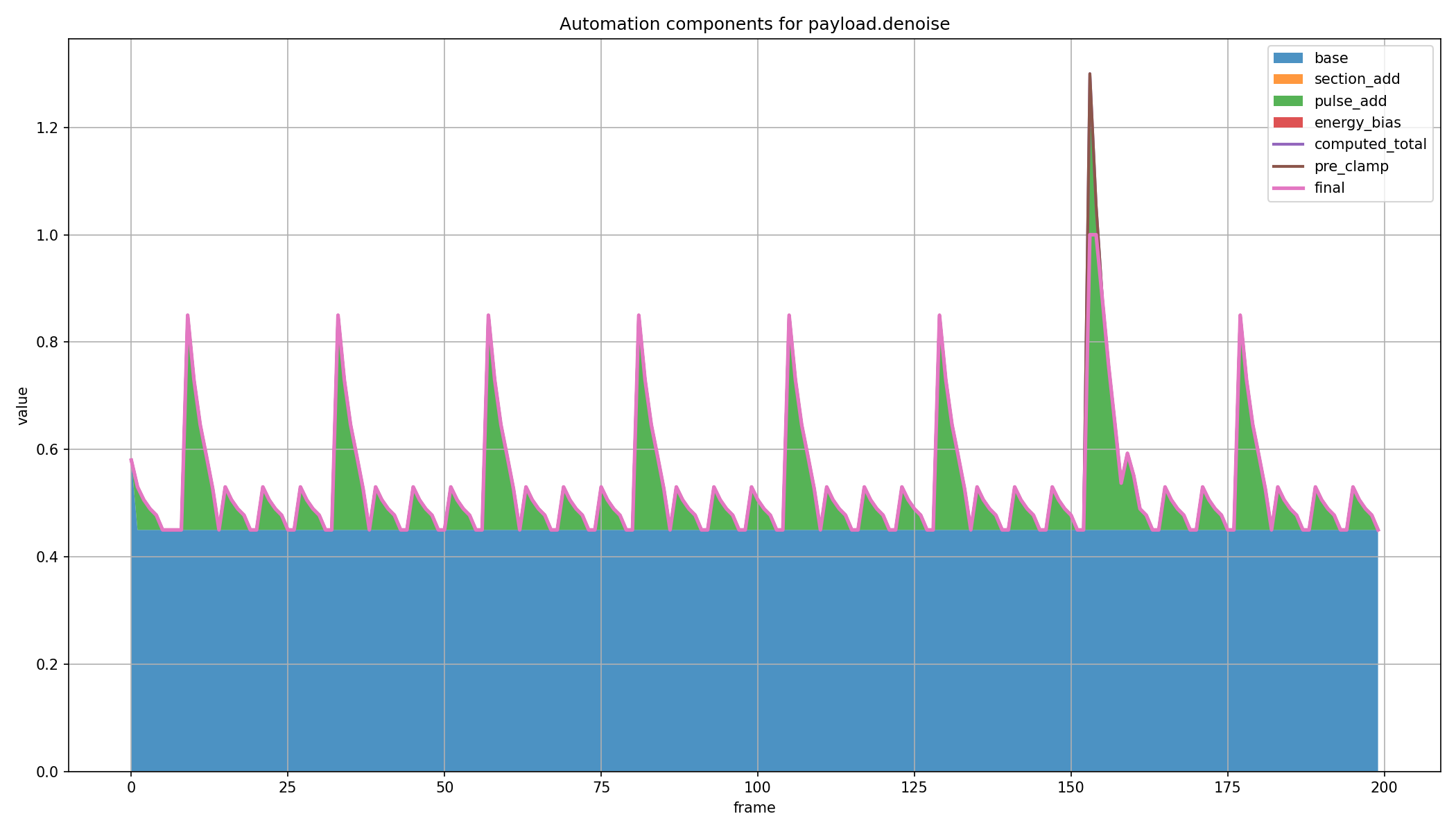

Instead of using fixed render settings, the system automates denoising and other parameters over time. Downbeats create larger pulses, intermediate beats create smaller pulses, and an additional energy-driven bias can push the renderer harder when the music intensifies.

In the accompanying plot, the denoising curve is built from several components: a base value, beat-driven pulse additions, and an experimental override in measure 7 that deliberately drives the system into a stronger hallucination event.
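As a rough illustration of how a curve like this could be assembled, here is a minimal Python sketch. Every name and number in it is an assumption made for the example (the tempo, frame rate, pulse sizes, linear pulse decay, energy gain, and override level are all hypothetical); it is not the actual Daydreamer implementation.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class CurveConfig:
    # All values below are illustrative assumptions, not Daydreamer settings.
    bpm: float = 120.0          # song tempo
    fps: int = 24               # video frame rate
    beats_per_measure: int = 4
    base: float = 0.35          # base denoising value
    downbeat_pulse: float = 0.25  # larger pulse on the first beat of a measure
    beat_pulse: float = 0.10      # smaller pulse on intermediate beats
    decay_beats: float = 0.5      # a pulse fades linearly over this many beats
    override_measure: int = 7     # 1-indexed measure with the forced event
    override_value: float = 0.90  # denoising floor during the override
    max_value: float = 1.0


def denoise_curve(
    num_frames: int,
    cfg: CurveConfig,
    energy: Optional[Sequence[float]] = None,
    energy_gain: float = 0.10,
) -> List[float]:
    """Return one denoising value per frame: base + beat pulse + energy bias."""
    curve = []
    for frame in range(num_frames):
        # Position of this frame measured in beats since the start of the song.
        beat_pos = frame / cfg.fps * cfg.bpm / 60.0
        beat_index = int(beat_pos)
        phase = beat_pos - beat_index  # 0.0 at the beat, approaching 1.0 before the next

        # Downbeats get the larger pulse, other beats the smaller one.
        is_downbeat = beat_index % cfg.beats_per_measure == 0
        amplitude = cfg.downbeat_pulse if is_downbeat else cfg.beat_pulse
        pulse = amplitude * max(0.0, 1.0 - phase / cfg.decay_beats)

        # Optional bias from a per-frame loudness/energy signal in [0, 1].
        bias = energy_gain * energy[frame] if energy is not None else 0.0

        value = cfg.base + pulse + bias

        # Experimental override: hold the curve high for one whole measure.
        measure = beat_index // cfg.beats_per_measure + 1
        if measure == cfg.override_measure:
            value = max(value, cfg.override_value)

        curve.append(min(cfg.max_value, value))
    return curve
```

Calling `denoise_curve(400, CurveConfig())` yields one value per frame that could be fed to a renderer as its per-frame denoising strength. Keeping the components separate (base, pulse, bias, override) makes it easy to plot each one on its own and to switch the override on or off for a single measure.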

4. Physics — Interaction with Real Motion

These experiments test how the renderer behaves when interacting with simulated physical objects.

The goal is to preserve motion consistency while still allowing creative hallucination.

5. How It Works — Inside the System

This video shows the internal signals driving the renderer.

Unity frames, control maps, and parameter changes are displayed in real time, revealing how structure, motion, and music interact to produce the final result.
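The feedback idea described in the introduction, where the model carries its own inventions forward, can be sketched in a few lines of Python. The page does not say how the feedback is implemented, so this is only one plausible arrangement: the previous output is blended into the next Unity frame before rendering. The `render` callable, the `feedback` weight, and the flat-list `Frame` type are all hypothetical stand-ins.

```python
from typing import Callable, List, Optional, Sequence

# Stand-in for an image: a flat list of pixel values (assumption for the sketch).
Frame = List[float]


def blend(structure: Frame, previous: Frame, feedback: float) -> Frame:
    """Mix the clean structural frame with the previous rendered output."""
    return [(1.0 - feedback) * s + feedback * p for s, p in zip(structure, previous)]


def run_with_feedback(
    unity_frames: Sequence[Frame],
    denoise: Sequence[float],
    render: Callable[[Frame, float], Frame],
    feedback: float = 0.5,
) -> List[Frame]:
    """Render frames in order, feeding each output into the next input.

    `render(init, strength)` is a hypothetical placeholder for the generative
    step; `denoise` supplies one strength value per frame.
    """
    outputs: List[Frame] = []
    previous: Optional[Frame] = None
    for frame, strength in zip(unity_frames, denoise):
        # The first frame has no history, so it starts from pure structure.
        init = frame if previous is None else blend(frame, previous, feedback)
        out = render(init, strength)
        outputs.append(out)
        previous = out
    return outputs
```

With a toy `render` that simply adds the strength value to every pixel, a single strong pulse on the first frame stays visible in later frames and fades gradually, which is the kind of persistence the feedback loop is meant to provide. A higher `feedback` weight keeps invented detail around longer at the cost of drifting further from the Unity structure.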